News

From the end of September 2023 to March 2024, a series of annual reports from regulatory bodies was tabled in the Queensland Parliament.

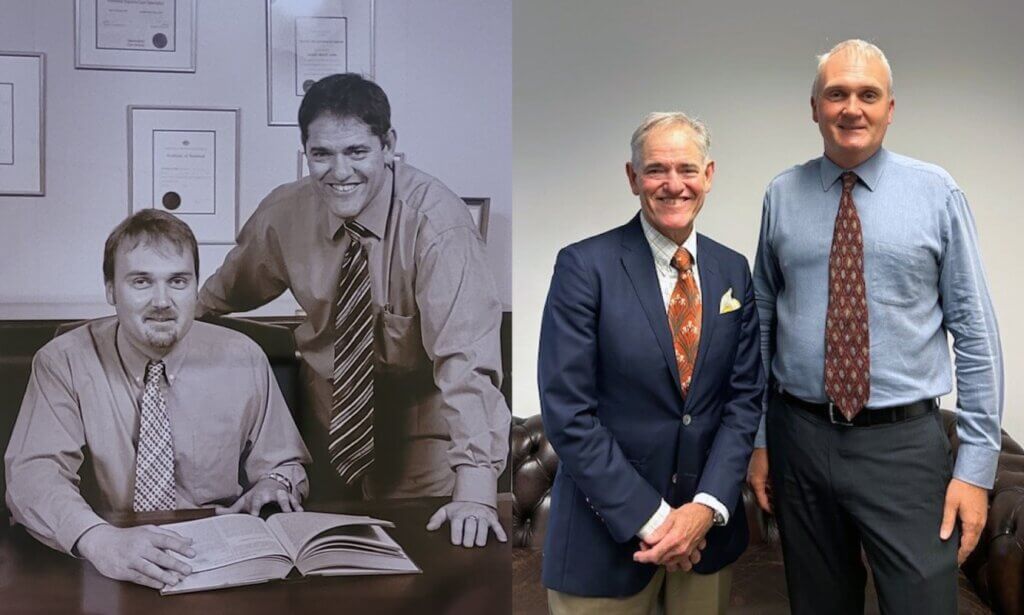

Morton & Morton marks 150 years in the legal profession this month.

The LGBTI Legal Service will combine a human rights seminar with drag bingo for a CPD event on Friday.

A recidivist sex offender has been detained indefinitely due to a "farcical" lack of community accommodation.

Newly admitted lawyer Jennifer Millers was always interested in a community-oriented career but wasn't sure which path to take when finishing high school.

Profession updates

BCCM Act reforms start 1 May

17 April 2024

PD on expert evidence issued

15 April 2024

PD: Direct Access Briefs

10 April 2024

PD regarding QCase implementation

8 April 2024

Latest

- Family law

- Case notes

- First nations

- Access to justice

- Family law

- Early career lawyers

Features

Celebrating our members

QLS celebrates our loyal members who have contributed a remarkable 25 or 50 years to the Society and the profession.

Excellence in Law Awards

Meet this year’s QLS Excellence in Law Award winners, read about their achievements, and see photos from the 2023 event.

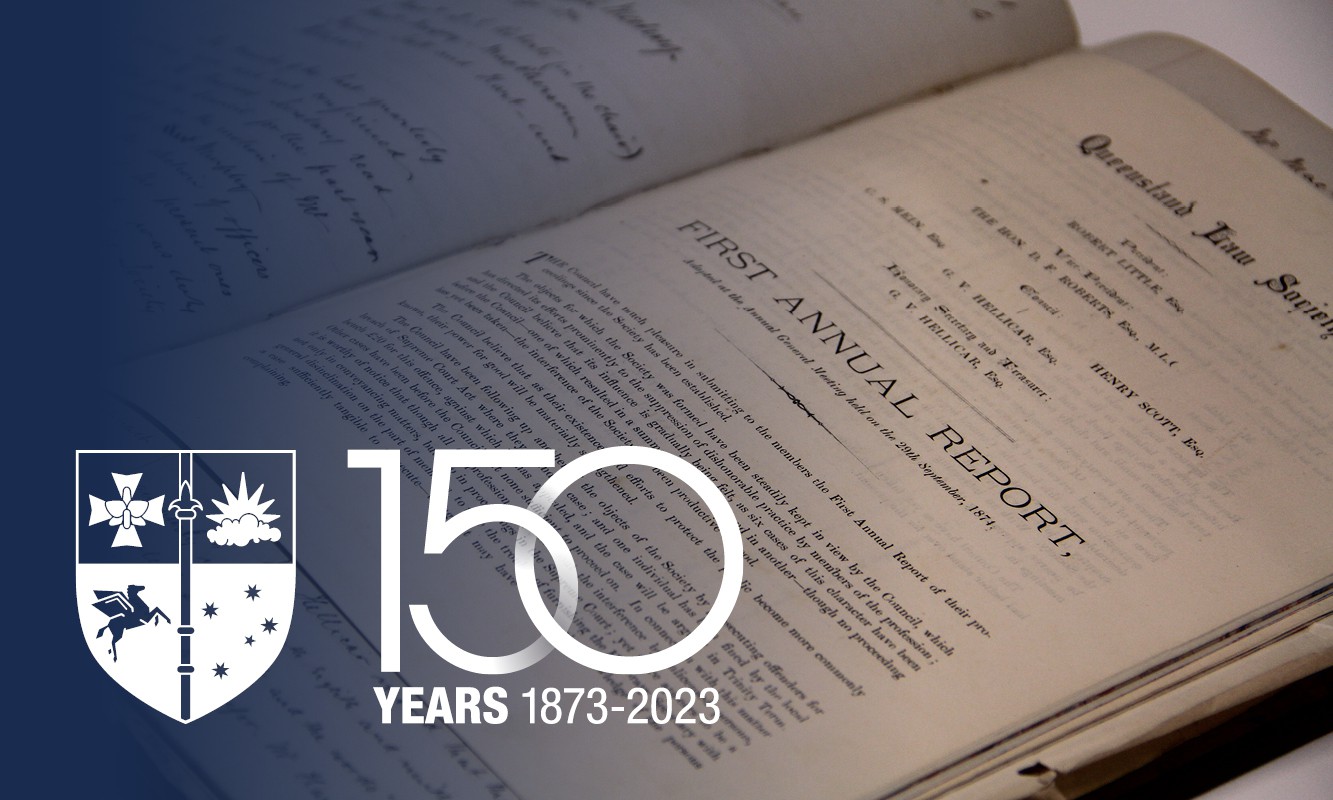

150 years: Special series

This year, QLS is marking the 150th anniversary of the first law society in Queensland with a series of feature articles.

Perspectives

- First nations

The UQ/Prisoners' Legal Service Deaths in Custody Project was established in 2016. It is led by law students and supervised by Professor Tamara Walsh.

- Perspectives

Mediate early and more than once if you’re not successful the first time.

Toby Boys

- First nations, News

Addressing inequity is what inspired Jazmin to study law. Today we mark Close the Gap Day.

Careers

In practice

- Family law

Henschel & Sartre (No. 3) [2023] FedCFamC1F 1081

Keleigh Robinson, Craig Nicol

- Access to justice

Legal Aid Queensland shares lessons about how lawyers can best help after a disaster.

People

- Early career lawyers, News

Newly admitted lawyer Jennifer Millers was always interested in a community-oriented career but wasn't sure which path to take when finishing high school.

Natalie Gauld

- Early career lawyers

Last month's moot had teams arguing about the validity and infringement of a sneaker midsole patent and the unregistered design right of its lacing system.

- Early career lawyers, News

The successful candidates were asked how they felt about being elected by their peers and their hopes for the committee for the next two years.

Natalie Gauld